Imaginative Machine Learning Projects and How To Build Them

Recently, I’ve been reading and learning a lot about Machine Learning (ML), and I’ve found that ML project ideas can be really out there in terms of imagination.

For example, if you don’t have friends, use GPT-3 to make one (not sure if they actually use GPT-3). If you think someone is texting you passive-aggressively, build a sentiment analysis RNN and use it to predict whether they’re being passive-aggressive. Can’t beat that one level in Mario 64? Use reinforcement learning to have an AI beat it for you (overkill, I know).

Compared to my previous work with Brain-Computer Interfaces, ML lets me build pretty much anything that comes to mind and sounds fun.

That realization got me thinking deeply about project ideas, and it culminated in the article you’re now reading.

Welcome to the World of Machine Learning

Visually, it looks like this…

Mathematically, it can look like this…

But I promise, it’s not that complex. Conceptually, machine learning is as simple as its name: a machine that’s learning. Surprisingly, we teach machines much like we teach humans: through rigorous testing.

We give our machine some questions on a test and assess how well it answers them. Based on its grade, we tweak its various knobs and dials according to which questions it got wrong, until it gets those questions right (think of it as revising every question you got wrong on a test, over and over). It sounds like it shouldn’t work, but once the process is repeated thousands of times in a matter of minutes, it does.
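To make the “test, grade, tweak, repeat” loop concrete, here is a minimal sketch in plain Python. It assumes a toy model with just two knobs (a weight `w` and a bias `b`) that should learn the made-up rule y = 2x + 1 from five example questions; the data, learning rate, and number of steps are all illustrative choices. Each round grades the model with a mean squared error and turns each knob slightly in the direction that lowers that error (gradient descent).

```python
# A toy "model" with two knobs: prediction = w * x + b.
# Hypothetical training data: the hidden rule is y = 2x + 1.
questions = [0.0, 1.0, 2.0, 3.0, 4.0]
answers = [1.0, 3.0, 5.0, 7.0, 9.0]


def grade(w, b):
    """Mean squared error: how wrong the model is on the whole 'test'."""
    return sum((w * x + b - y) ** 2 for x, y in zip(questions, answers)) / len(questions)


def train(steps=2000, learning_rate=0.02):
    w, b = 0.0, 0.0  # start with the knobs in an arbitrary position
    n = len(questions)
    for _ in range(steps):
        # How much the error changes if we turn each knob (the gradient).
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(questions, answers)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(questions, answers)) / n
        # Turn each knob a little in the direction that lowers the error.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


w, b = train()
print(f"w={w:.3f}, b={b:.3f}, error={grade(w, b):.6f}")
```

No single step gets the answer right; it’s the thousands of small corrections that drive `w` and `b` very close to 2 and 1.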

These machines take the form of a neural network, which is essentially a really big function. Neural networks were named after the neurons in our brains, as they were inspired by them and were designed with neurons in mind (I couldn’t resist).

Neurons work by taking in input from other neurons through their dendrites. If that input is strong enough, it activates the neuron, which fires a signal forward through its axon. This process repeats across the neurons in your brain, which is how they communicate with each other.

Neural networks follow a similar pattern, where one neuron firing influences the firing of the next neurons in the network. Here’s what a basic neural network looks like. Try not to pay attention to the math; this article won’t go into it.

Continuing our testing analogy from before, X₁–X₅ are the various parts of the test question that we give the machine; together they form the input layer. The machine tries to answer the question by feeding these input values (the parts of the question) through multiple “hidden” layers. Each neuron in a hidden layer multiplies its inputs by weights, adds a bias, and outputs a single value (there’s a bit more to it, but that isn’t important for now). The outputs of the hidden layers all feed into the output layer, where the machine gives its answer.
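Here is a minimal NumPy sketch of that forward pass, assuming 5 inputs (X₁–X₅), two hidden layers of 4 neurons, and a single output; the layer sizes, random weights, and input values are made up. It also uses ReLU as the activation function, one of the details the paragraph above skips; that choice is an assumption, since many other activation functions exist.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

# Hypothetical sizes: 5 inputs (X1-X5), two hidden layers of 4 neurons, 1 output.
layer_sizes = [5, 4, 4, 1]

# One weight matrix and one bias vector per layer (random, i.e. untrained).
weights = [
    rng.normal(size=(n_in, n_out))
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:])
]
biases = [np.zeros(n_out) for n_out in layer_sizes[1:]]


def relu(z):
    """A common activation function: keeps positive values, zeroes out negatives."""
    return np.maximum(z, 0.0)


def forward(x):
    """Feed the input layer through the hidden layers to the output layer."""
    activation = x
    for i, (W, b) in enumerate(zip(weights, biases)):
        z = activation @ W + b  # multiply by weights, add biases
        # Hidden layers apply the activation function; the output layer stays linear here.
        activation = relu(z) if i < len(weights) - 1 else z
    return activation


x = np.array([0.2, -1.0, 0.5, 3.0, 0.0])  # X1..X5: the "parts of the question"
print(forward(x))  # a single output value (meaningless until the network is trained)
```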

If the machine gets it wrong, it works backwards and tunes all of the weights and biases so that it answers better next time, in a process known as back-propagation (a lot of calculus, and not enough time).
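For a peek at what “working backwards” means without the full calculus lecture, here is a sketch of back-propagation on a hypothetical tiny network (3 inputs, 4 tanh hidden neurons, 1 output, squared-error loss; all sizes and values are made up). The chain rule gives the gradient of the loss with respect to every weight and bias. The sketch checks one of those gradients against a slow “nudge each weight and watch the loss” estimate, then takes one small gradient-descent step.

```python
import numpy as np

rng = np.random.default_rng(seed=1)

# Hypothetical tiny network: 3 inputs -> 4 hidden neurons (tanh) -> 1 output.
W1, b1 = rng.normal(size=(3, 4)), np.zeros(4)
W2, b2 = rng.normal(size=(4, 1)), np.zeros(1)

x = np.array([0.5, -1.2, 2.0])  # one made-up example question
y = np.array([1.0])             # its made-up correct answer


def loss_fn(W1, b1, W2, b2):
    """Half the squared error between the network's answer and the correct one."""
    h = np.tanh(x @ W1 + b1)
    y_hat = h @ W2 + b2
    return 0.5 * float(np.sum((y_hat - y) ** 2))


def backprop(W1, b1, W2, b2):
    """Forward pass, then work backwards with the chain rule to get every gradient."""
    # Forward pass, keeping the intermediate values the backward pass needs.
    h = np.tanh(x @ W1 + b1)
    y_hat = h @ W2 + b2
    # Backward pass: start at the loss and move back towards the inputs.
    d_yhat = y_hat - y             # dLoss/dy_hat
    dW2 = np.outer(h, d_yhat)      # dLoss/dW2
    db2 = d_yhat                   # dLoss/db2
    d_h = W2 @ d_yhat              # dLoss/dh
    d_z = d_h * (1.0 - h ** 2)     # through tanh: tanh'(z) = 1 - tanh(z)^2
    dW1 = np.outer(x, d_z)         # dLoss/dW1
    db1 = d_z                      # dLoss/db1
    return dW1, db1, dW2, db2


def numerical_grad_W1(eps=1e-6):
    """Slow sanity check: nudge each weight in W1 and see how the loss changes."""
    grad = np.zeros_like(W1)
    for idx in np.ndindex(*W1.shape):
        W_plus, W_minus = W1.copy(), W1.copy()
        W_plus[idx] += eps
        W_minus[idx] -= eps
        grad[idx] = (loss_fn(W_plus, b1, W2, b2) - loss_fn(W_minus, b1, W2, b2)) / (2 * eps)
    return grad


dW1, db1, dW2, db2 = backprop(W1, b1, W2, b2)
grad_ok = np.allclose(dW1, numerical_grad_W1(), atol=1e-6)

# One small step of gradient descent: tune every weight and bias a little.
learning_rate = 0.01
before = loss_fn(W1, b1, W2, b2)
W1, b1 = W1 - learning_rate * dW1, b1 - learning_rate * db1
W2, b2 = W2 - learning_rate * dW2, b2 - learning_rate * db2
after = loss_fn(W1, b1, W2, b2)
print("gradients match:", grad_ok, "| loss:", before, "->", after)
```

In practice, libraries like PyTorch compute these gradients automatically, but the idea is the same.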

Now, let’s talk about a toy example of a classification problem and how the machine will approach it. The question that the machine (also called a model) needs to answer is: What digit is inside this image?

What we need to do is create a neural network that takes in a picture of a digit and outputs, for each possible digit (0–9), the probability that the image shows that digit.
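A common way to get one probability per digit (an assumption here, since the article doesn’t name a method) is to give the output layer 10 neurons and pass their raw scores through a softmax. Here’s a plain-Python sketch with made-up scores:

```python
import math


def softmax(scores):
    """Turn raw output scores into probabilities that are positive and sum to 1."""
    largest = max(scores)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical raw scores from the output layer, one per digit 0-9.
scores = [1.2, 0.3, -0.5, 4.1, 0.0, 0.7, -1.3, 2.2, 0.1, -0.4]
probs = softmax(scores)

# The prediction is the digit with the highest probability.
predicted_digit = max(range(10), key=lambda d: probs[d])
print(predicted_digit, round(probs[predicted_digit], 3))
```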

So, we feed the image into the network’s input layer, which takes in 784 features (the proper name for inputs). The input layer is 784 features long because each image here is a 28x28 grayscale image, which is essentially a 28x28 matrix of pixel values from 0–255 (0 being black, 255 being white).

In order to input this image, we need to turn it into a vector, as that’s what our model accepts as input. So we flatten this matrix into a 784-long feature vector, since 28 x 28 = 784.
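The flattening step takes one line in NumPy. In this sketch, a random array stands in for a real 28x28 digit image (for example, one from MNIST), and scaling the pixels to 0–1 is a common extra step rather than something the text requires.

```python
import numpy as np

# Stand-in for a real 28x28 grayscale digit image: random pixel values from 0 to 255.
rng = np.random.default_rng(seed=0)
image = rng.integers(0, 256, size=(28, 28), dtype=np.uint8)

# Flatten the 28x28 matrix into a 784-long feature vector (28 * 28 = 784).
# reshape(-1) goes row by row, so row 1 starts at index 28.
features = image.reshape(-1)

# Optional but common: scale pixel values from 0-255 down to 0.0-1.0.
features = features.astype(np.float32) / 255.0

print(image.shape, "->", features.shape)
```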

Then we feed our features into the network, which tries to predict what digit is inside the image and optimizes itself over many iterations.

Great! Now that you know a little bit about how machines learn, let’s jump into the practical and disruptive* applications of it.

Idea #1: Write Me An Essay

Elevator pitch

Have you ever been given an essay (or something else) that you have to write but don’t want to? What if we used a Long Short-Term Memory (LSTM) Recurrent Neural Network to write a perfect essay/article with half the effort?

Practicability

I’m dreading English class in 3 months… I might do this if I’m super desperate. It’s not that I hate writing, it’s that I hate writing essays.

How do we build this

First, we need a dataset. I’m going to take the perspective of a high schooler when building this project, since a little bit of plagiarism doesn’t really matter in school (jk). Each English teacher usually has their own preferences about the kinds of essays they like, so ask your teacher for a bunch of example essays (ones that got a mark of 93% or higher) to build a dataset from.

Once we have our dataset, we can train an LSTM on it and then have it generate our desired output (the essay).
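Before any training happens, the essays have to be turned into something an LSTM can read. One common approach (an assumption; the article doesn’t specify one) is a character-level model, where the network reads a window of characters and learns to predict the next one. This plain-Python sketch builds those training pairs; the two “essays” and the window length `seq_len` are hypothetical placeholders.

```python
# Hypothetical stand-in for the dataset of high-scoring example essays.
essays = [
    "The theme of the novel is resilience.",
    "In conclusion, the author shows that resilience matters.",
]

text = "\n".join(essays)

# Build a character vocabulary and map each character to an integer ID.
vocab = sorted(set(text))
char_to_id = {ch: i for i, ch in enumerate(vocab)}
id_to_char = {i: ch for ch, i in char_to_id.items()}

encoded = [char_to_id[ch] for ch in text]

# Slide a window over the text: each input is `seq_len` characters,
# and the target is the same window shifted one character to the right.
seq_len = 10
inputs, targets = [], []
for start in range(len(encoded) - seq_len):
    inputs.append(encoded[start:start + seq_len])
    targets.append(encoded[start + 1:start + seq_len + 1])

print(len(vocab), "characters in vocabulary,", len(inputs), "training sequences")
```

An LSTM trained on these pairs learns which character tends to come next; generating an essay then means repeatedly sampling a character and feeding it back in.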

Introduction to LSTMs

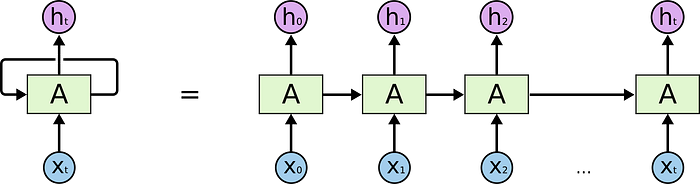

In order to understand what an LSTM is, we first have to understand what a Recurrent Neural Network (RNN) is. RNNs are neural networks that can remember what they’ve seen before. They do this by feeding their previous output back into themselves as an input, alongside the new input from us. Below is a diagram of what this looks like.

hₜ is the output of A at step t, and xₜ is the input at that step. The previous output, hₜ₋₁, is also fed back into A as an input. When you unroll it like it is here, it’s pretty intuitive how the network feeds itself previous information while also taking in new information.
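The unrolled loop can be sketched in a few lines of NumPy. This assumes the simplest (“vanilla”) RNN block, hₜ = tanh(xₜ·W_x + hₜ₋₁·W_h + b); the sizes, random weights, and input sequence are made up for illustration.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

# Hypothetical sizes: each input x_t has 3 features, the memory h_t has 5 values.
input_size, hidden_size = 3, 5
W_x = rng.normal(scale=0.5, size=(input_size, hidden_size))   # weights for the new input
W_h = rng.normal(scale=0.5, size=(hidden_size, hidden_size))  # weights for the previous output
b = np.zeros(hidden_size)


def rnn_step(x_t, h_prev):
    """One application of the block A: combine the new input with the previous output."""
    return np.tanh(x_t @ W_x + h_prev @ W_h + b)


# "Unrolling": apply the same block (same weights) to each element of a sequence in turn.
sequence = rng.normal(size=(4, input_size))  # 4 made-up time steps
h = np.zeros(hidden_size)  # no memory before the first step
for x_t in sequence:
    h = rnn_step(x_t, h)  # h_t becomes an input to the next step
print(h)
```

Because `h` is passed from step to step, the final output depends on every earlier input, not just the last one.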

Normal neural networks, by contrast, forget each input as soon as they’ve processed it, so they don’t have any memory whatsoever. This is an issue when handling sequential data (like text or video frames), because you need to remember earlier parts of the sequence to piece it together effectively.

However, for our purposes, RNNs have a flaw: in practice, they only remember the last few inputs well (say, the previous word or the previous video frame), because older information fades as the sequence gets longer. If neural networks are plants (they just live; generally they don’t remember anything), RNNs are goldfish, and LSTMs are elephants.

LSTMs (Long Short-Term Memory networks) are RNNs that have the ability to remember details for a longer period of time. Here’s a diagram explaining how they do this.

Similarly to RNNs, LSTMs feed themselves information from previous iterations. However, instead of having just one line of memory, they have two. The line of memory carried over from RNNs is the line at the bottom, where hₜ₋₁ is the input. We will call this “short-term memory.”

What’s new is the addition of Cₜ (often called the cell state), the line of memory at the top, which we will call “long-term memory.” This line runs along the top of the block and is only lightly modified at each step, so information can travel along it mostly unchanged.
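Without going into the full explanation, here’s a minimal NumPy sketch of a single LSTM step using the standard textbook gate equations (forget, input, and output gates). The sizes and random weights are hypothetical. Cₜ is the “long-term memory” line (it is only scaled by the forget gate and added to by the input gate), and hₜ is the “short-term memory” line that also serves as the step’s output.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

# Hypothetical sizes for illustration.
input_size, hidden_size = 3, 4
concat_size = input_size + hidden_size

# One weight matrix and bias per gate: f(orget), i(nput), g (candidate), o(utput).
W = {name: rng.normal(scale=0.5, size=(concat_size, hidden_size)) for name in "figo"}
b = {name: np.zeros(hidden_size) for name in "figo"}


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def lstm_step(x_t, h_prev, c_prev):
    """One LSTM step: returns the new short-term memory h_t and long-term memory c_t."""
    v = np.concatenate([h_prev, x_t])
    f = sigmoid(v @ W["f"] + b["f"])  # forget gate: how much old long-term memory to keep
    i = sigmoid(v @ W["i"] + b["i"])  # input gate: how much new information to write
    g = np.tanh(v @ W["g"] + b["g"])  # candidate values to write into long-term memory
    o = sigmoid(v @ W["o"] + b["o"])  # output gate: how much memory to expose as h_t
    c_t = f * c_prev + i * g          # long-term memory: only scaled and added to
    h_t = o * np.tanh(c_t)            # short-term memory / output for this step
    return h_t, c_t


h = np.zeros(hidden_size)
c = np.zeros(hidden_size)
for x_t in rng.normal(size=(5, input_size)):  # 5 made-up time steps
    h, c = lstm_step(x_t, h, c)
print("h_t:", h)
print("C_t:", c)
```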

This article is already getting pretty long, so in the interest of time I’ll leave the in-depth explanation of LSTMs for another article. If you would like to read more about LSTMs, some great resources are:

If you want to go further, I found a resource for building an LSTM in PyTorch for a use case similar to this one.

Idea #2: Using GANs to Create a New Kanye Album

Elevator pitch

I’m a really big Kanye fan, so much so that my old logo was inspired by the Yeezy bear (his mascot). If there’s one thing Kanye is famous for, it’s not releasing his music. As a result, there’s a lot of leaked music from albums that had the potential to be great but were never released in full. Basically, Kanye needs to drop his albums, but he doesn’t… so I’m going to do it for him.

Practicability

It would be really crazy if someone actually built this (hmm, maybe I’ll do it soon) and then published the album they created with the GAN. I wonder how the copyright would work, though… who owns the songs? Anyway, if this project gets built, it would be game-changing for the music industry.

How do we build this

This part is actually pretty tricky for this idea. First we need a dataset.

I found this Excel spreadsheet listing unreleased Kanye songs and where to find them. My guess is that if you can collect the MP3 files for every song in that spreadsheet, plus all of his released music, you’ll have a big enough dataset to work with. If you really want to get crazy, you could find the original samples for all of his music, convert all of the MP3 files into MIDI files, and use the transformations applied to the samples as another input for our ML model.

Speaking of our ML model, we’re going to use a Generative Adversarial Network (GAN) to build this. I chose this model because GANs have been used for similar music tasks, as well as for creating fake images of faces based on real ones.

So I thought that it would make sense to choose a GAN to perform a similar task but using music.

Introduction to GANs

A GAN is an unconventional type of neural network made up of two different networks: the Discriminator and the Generator. The Generator tries to generate fake data that fools the Discriminator, while the Discriminator tries to tell which data is real and which was created by the Generator.

This creates a competition where both models try to outdo each other, and over time the Generator’s output gets closer and closer to the real data.
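The competition can be written down as two opposing loss functions. This plain-Python sketch uses the standard binary cross-entropy GAN losses, with the commonly used “non-saturating” form for the Generator. The Discriminator scores are made-up numbers; in a real GAN both players are neural networks, and training alternates between lowering the Discriminator’s loss and lowering the Generator’s.

```python
import math


def discriminator_loss(d_real, d_fake):
    """The Discriminator wants D(real) -> 1 and D(fake) -> 0 (binary cross-entropy)."""
    return -(math.log(d_real) + math.log(1.0 - d_fake))


def generator_loss(d_fake):
    """The Generator wants its fakes to be scored as real, i.e. D(fake) -> 1."""
    return -math.log(d_fake)


# Hypothetical Discriminator scores (probability that a clip is real).
sharp_critic = dict(d_real=0.9, d_fake=0.1)    # easily spots the fakes
fooled_critic = dict(d_real=0.6, d_fake=0.55)  # fakes are passing as real

for name, s in [("sharp", sharp_critic), ("fooled", fooled_critic)]:
    print(name, round(discriminator_loss(**s), 3), round(generator_loss(s["d_fake"]), 3))
```

When the Discriminator is winning, its loss is low and the Generator’s is high; when the fakes start passing as real, the situation flips. That tug-of-war is what pushes both models to improve.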

Sidenote: Can you believe that we engineered evolution through competition, and it actually works?

The math behind GANs is pretty cool, but that’s an article for another time (it might be coming out soon, though ;) ). If you want a taste of what the math looks like, read this blog post that I found really insightful.

Conclusion

I hope I entertained and taught you a bit with this article. I’m really loving the field of ML and the change of pace from neurotech (what I’m used to working with). There’s so much high-quality content online and so much to learn (and write about).

I’m currently #buildinginpublic for October 2021 on Twitter and would love to have your support there! I’ll be posting daily updates on what I’m building, and what I’m thinking. I hope to see some of you guys there.

Hey! If you made it all the way down here, I just want to say thank you for reading! If you enjoyed the article, clap it up and contact me on Twitter, LinkedIn, or my email ahnaafk@gmail.com.

Check out my website or subscribe to my newsletter while you’re at it.